Margaret Mitchell - margarmitchell {at} gmail.com

Multi-Task Learning for Mental Health using Social Media Text

Benton, A., Mitchell, M., and Hovy, D. (2017). Multi-Task Learning for Mental Health using Social Media Text. Proceedings of EACL 2017.

Multi-task learning provides an opportunity to mix related tasks together, which can benefit all of the tasks, some of the tasks, or none of the tasks. Be ready for 'none of the tasks': there are a lot of details to get right. This paper discusses some of the things we did to move multi-task learning from ineffective to effective, with a look towards the longer-term goals for this framework.

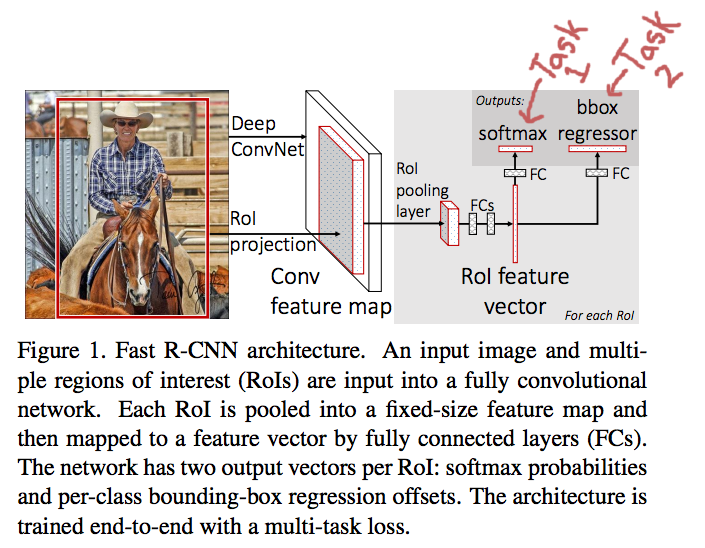

I first became aware of multi-task learning while reading Ross Girshick's "Fast R-CNN" paper. In that work, Girshick was thinking about object detection, and specifically about the dual problems of object localization and object classification in an image. The idea felt natural following the recent successes in deep learning: predict both the object location and the object class at once, modeled as two separate tasks using one network. Locating objects would be one task, with one loss; classifying object regions would be another task, with another loss. For each region, the forward pass of the network predicts:

- object class

- region offsets that form a bounding box (bounding box regression)

From Ross Girshick's Fast R-CNN paper.
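For reference, the Fast R-CNN paper folds the two losses into a single objective. As we understand it, for a region with true class $u$ (where $u = 0$ is background), predicted class probabilities $p$, predicted box offsets $t^u$ for class $u$, and target offsets $v$, the loss is:

```latex
L(p, u, t^u, v) = L_{\text{cls}}(p, u) + \lambda \, [u \ge 1] \, L_{\text{loc}}(t^u, v)

L_{\text{cls}}(p, u) = -\log p_u

L_{\text{loc}}(t^u, v) = \sum_{i \in \{x, y, w, h\}} \operatorname{smooth}_{L_1}\!\left(t^u_i - v_i\right),
\qquad
\operatorname{smooth}_{L_1}(x) =
\begin{cases}
  0.5\, x^2 & \text{if } |x| < 1 \\
  |x| - 0.5 & \text{otherwise}
\end{cases}
```

The Iverson bracket $[u \ge 1]$ switches the localization task off for background regions, and $\lambda$ balances the two tasks (the paper uses $\lambda = 1$). Each task keeps its own loss; the network is trained on their weighted sum.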

I didn't think of it as multi-task learning at the time, but rather as joint learning. In retrospect it's clearly multi-task: the network uses more than one loss, and each loss corresponds to a task. The tasks are still trained jointly, though (very, very jointly: the whole network is shared up to the two task-specific output layers, and the Faster R-CNN work pulls things out further).
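The "shared until the two task output layers" setup is often called hard parameter sharing. Below is a minimal, dependency-free Python sketch of one forward pass and the combined loss, assuming a single shared ReLU layer, a softmax classification head, and a class-agnostic four-number box head. The function names and the `params` layout are hypothetical, chosen for illustration; this is not Girshick's implementation, which uses a deep convolutional trunk and per-class box outputs.

```python
import math


def relu(v):
    """Element-wise ReLU on a list of floats."""
    return [max(0.0, x) for x in v]


def linear(W, b, x):
    """Affine map; W is a list of rows, one row per output unit."""
    return [sum(w_j * x_j for w_j, x_j in zip(row, x)) + b_i
            for row, b_i in zip(W, b)]


def softmax_cross_entropy(logits, label):
    """-log p_label, computed stably by subtracting the max logit."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_z - logits[label]


def smooth_l1(pred, target):
    """Sum of smooth-L1 penalties over paired coordinates."""
    total = 0.0
    for p, t in zip(pred, target):
        d = abs(p - t)
        total += 0.5 * d * d if d < 1.0 else d - 0.5
    return total


def multitask_loss(params, x, label, box_target, lam=1.0):
    """Hypothetical two-task loss with a shared trunk (label 0 = background)."""
    # Shared trunk: both tasks read the same hidden representation h.
    h = relu(linear(params["W_shared"], params["b_shared"], x))
    # Task 1 head: one score per class.
    logits = linear(params["W_cls"], params["b_cls"], h)
    # Task 2 head: four box offsets (class-agnostic in this sketch).
    offsets = linear(params["W_box"], params["b_box"], h)
    loss_cls = softmax_cross_entropy(logits, label)
    # The box task only contributes for non-background regions.
    loss_box = smooth_l1(offsets, box_target) if label >= 1 else 0.0
    return loss_cls + lam * loss_box
```

The design point is in the first line of `multitask_loss`: gradients from both losses flow back into `W_shared` and `b_shared`, so each task shapes the representation the other task uses. The weight `lam` is where "all, some, or none of the tasks benefit" often gets decided in practice, since a badly balanced sum lets one task dominate the shared layers.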

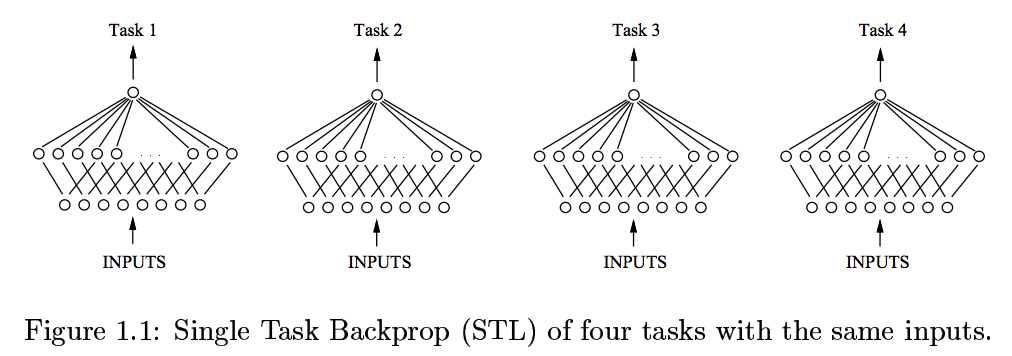

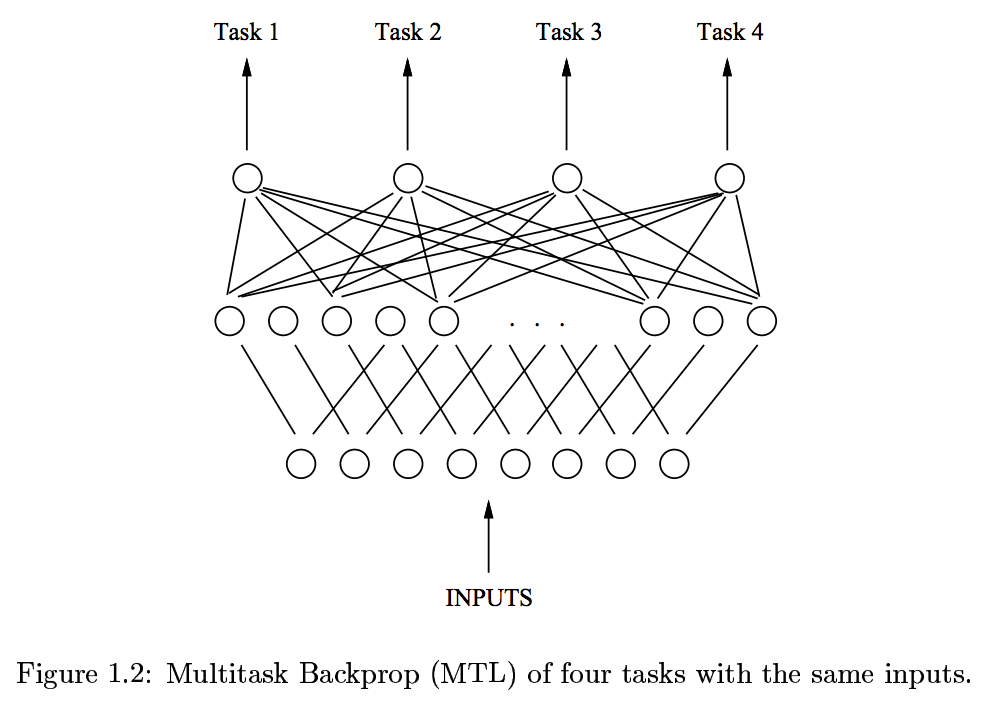

In 1993, Rich Caruana wrote a beautiful thesis on multi-task learning, where he explains some of the initial motivations for this approach: generalization in neural networks improves when the network is trained to represent underlying regularities shared across tasks. It's a computational model of how solutions learned for one problem may help in learning solutions for another problem (like how sanding the floor and "wax on, wax off" helped Daniel in The Karate Kid).

From Rich Caruana's thesis, 1993.

One of the applications he proposed was in the medical domain, similar to our application in this paper. We were thinking about how to predict suicide risk (imminent risk) in a clinical care environment, using writing that a patient might be comfortable sharing with their clinician. This was part of the JSALT Workshop series on Detecting Risk and Protective Factors of Mental Health using Social Media Linked with Electronic Health Records. (Neither Dirk nor I is in the photo, because we were not cool enough, but Adrian, who was amazing to work with, is there.)

In this domain, we were thinking about an application where someone with suicidal thoughts could get help from an on-call clinical expert at a moment when suicide risk is high. A related application would be providing quantitative evidence that helps clinicians secure increased care for a patient, e.g., for an insurance company that requires proof of increased need.

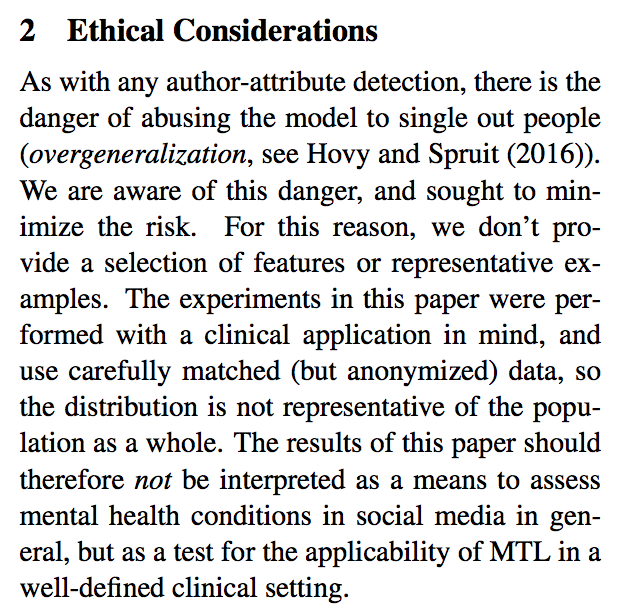

You can see how quickly ethical considerations come up: for example, dual use, or providing a means for "armchair diagnoses" of colleagues or potential hires based on what the paper exposes. I know, and so does Dirk. So, together with others interested in the topic, we organized an ACL workshop on Ethics in NLP, held at the same conference where we're presenting this work (that last part is a coincidence). We have also added an Ethical Considerations section to the paper, which among other things explains why we do not include examples.

We have tried to write this document through the lens of what might benefit patients, while describing an interesting general framework from an ML/NLP perspective.

To be presented in Valencia, Spain, at EACL 2017.

The work reported here began at JSALT 2016 and was supported by JHU via grants from DARPA (LORELEI), Microsoft, Amazon, Google, and Facebook.